12 Portfolio backtesting

In this section, we introduce the notations and framework that will be used when analyzing and comparing investment strategies. Portfolio backtesting is often conceived and perceived as a quest to find the best strategy - or at least a solidly profitable one. When carried out thoroughly, this possibly long endeavor may entice the layman to confuse a fluke for a robust policy. Two papers published back-to-back warn against the perils of data snooping, which is related to \(p\)-hacking. In both cases, the researcher will torture the data until the sought result is found.

Fabozzi and Prado (2018) acknowledge that only strategies that work make it to the public, while thousands (at least) have been tested. Picking the pleasing outlier (the only strategy that seemed to work) is likely to generate disappointment when switching to real trading. In a similar vein, R. Arnott, Harvey, and Markowitz (2019) provide a list of principles and safeguards that any analyst should follow to avoid any type of error when backtesting strategies. The worst type is arguably false positives whereby strategies are found (often by cherrypicking) to outperform in one very particular setting, but will likely fail in live implementation.

In addition to these recommendations on portfolio construction, R. Arnott et al. (2019) also warn against the hazards of blindly investing in smart beta products related to academic factors. Plainly, expectations should not be set too high, at the risk of disappointment. Another takeaway from their article is that economic cycles have a strong impact on factor returns: correlations change quickly and drawdowns can be magnified in times of major downturns.

Backtesting is more complicated than it seems and it is easy to make small mistakes that lead to apparently good portfolio policies. This chapter lays out a rigorous approach to this exercise, discusses a few caveats, and proposes a lengthy example.

12.1 Setting the protocol

We consider a dataset with three dimensions: time \(t=1,\dots,T\), assets \(n=1,\dots,N\) and characteristics \(k=1,\dots,K\). One of these attributes must be the price of asset \(n\) at time \(t\), which we will denote \(p_{t,n}\). From that, the computation of the arithmetic return is straightforward (\(r_{t,n}=p_{t,n}/p_{t-1,n}-1\)) and so is any heuristic measure of profitability. For simplicity, we assume that time points are equidistant or uniform, i.e., that \(t\) is the index of a trading day or of a month for example. If each point in time \(t\) has data available for all assets, then this makes a dataset with \(I=T\times N\) rows.

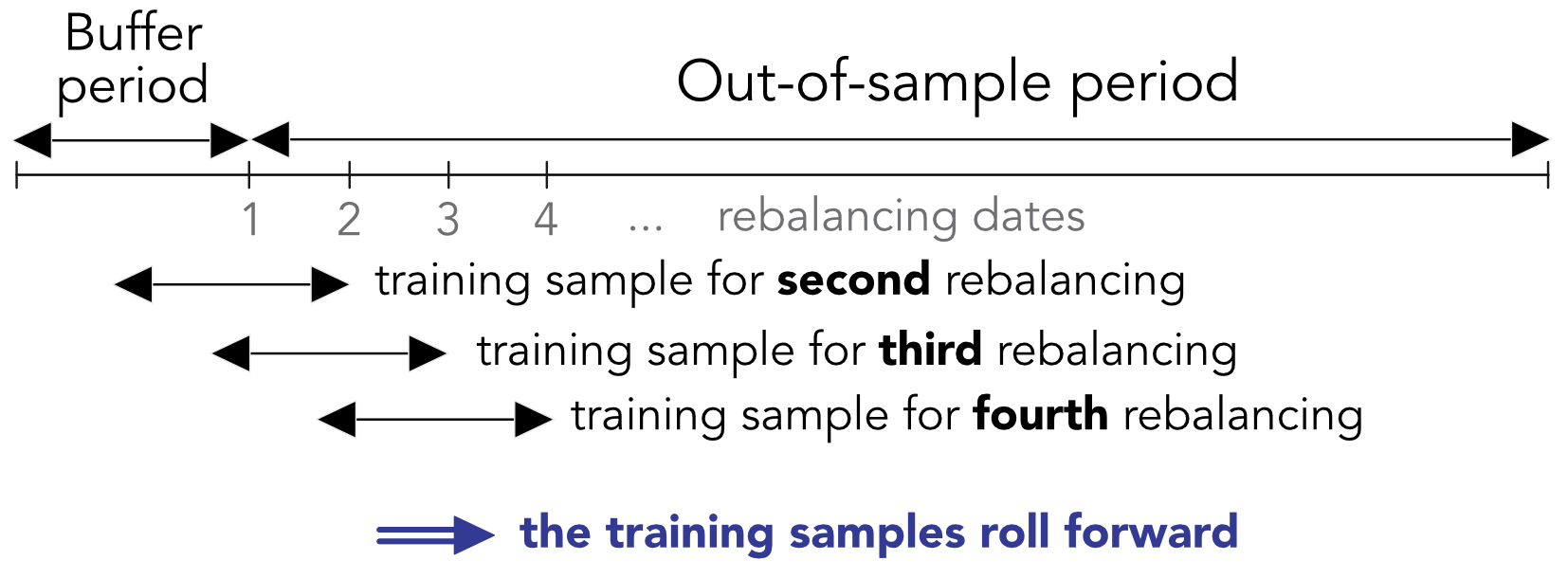

The dataset is first split in two: the out-of-sample period and the initial buffer period. The buffer period is required to train the models for the first portfolio composition. This period is determined by the size of the training sample. There are two options for this size: fixed (usually equal to 2 to 10 years) and expanding. In the first case, the training sample will roll over time, taking into account only the most recent data. In the second case, models are built on all of the available data, the size of which increases with time. This last option can create problems because the first dates of the backtest are based on much smaller amounts of information compared to the last dates. Moreover, there is an ongoing debate on whether including the full history of returns and characteristics is advantageous or not. Proponents argue that this allows models to see many different market conditions. Opponents make the case that old data is by definition outdated and thus useless and possibly misleading because it won’t reflect current or future short-term fluctuations.

Henceforth, we choose the rolling period option for the training sample, as depicted in Figure 12.1.

FIGURE 12.1: Backtesting with rolling windows. The training set of the first period is simply the buffer period.

Two crucial design choices are the rebalancing frequency and the horizon at which the label is computed. It is not obvious that they should be equal but their choice should make sense. It can seem right to train on a 12-month forward label (which captures longer trends) and invest monthly or quarterly. However, it seems odd to do the opposite and train on short-term movements (monthly) and invest at a long horizon.

These choices have a direct impact on how the backtest is carried out. If we note:

- \(\Delta_h\) for the holding period between 2 rebalancing dates (in days or months);

- \(\Delta_s\) for the size of the desired training sample (in days or months - not taking the number of assets into consideration);

- \(\Delta_l\) for the horizon at which the label is computed (in days or months),

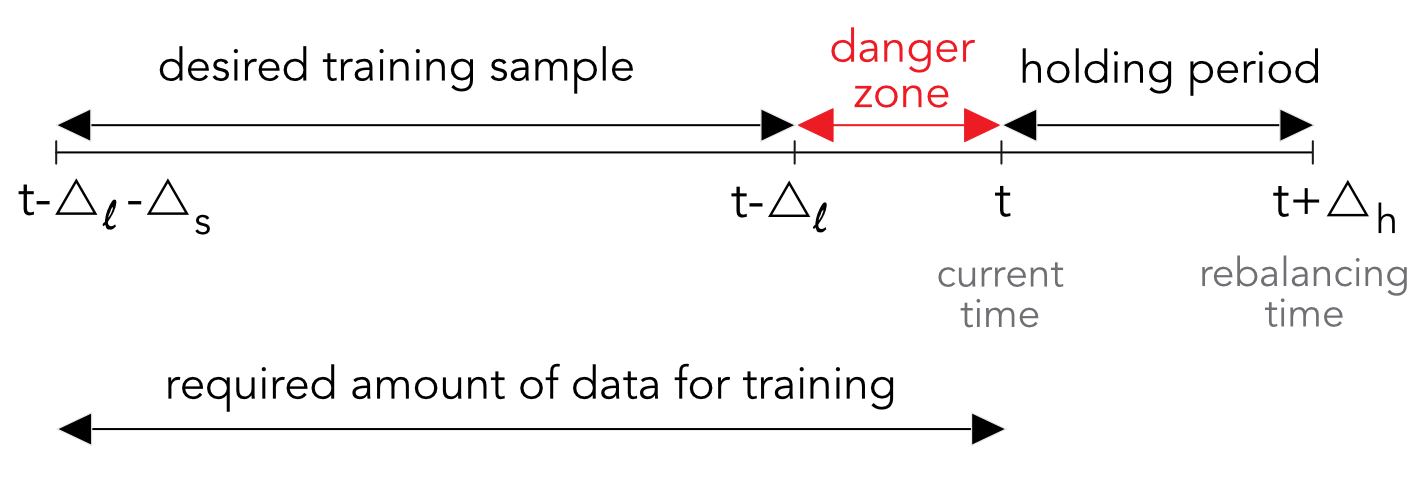

then the total length of the training sample should be \(\Delta_s+\Delta_l\). Indeed, at any moment \(t\), the training sample should stop at \(t-\Delta_l\) so that the last point corresponds to a label that is calculated until time \(t\). This is highlighted in Figure 12.2 in the form of the red danger zone. We call it the red zone because any observation which has a time index \(s\) inside the interval \((t-\Delta_l,t]\) will engender a forward looking bias. Indeed if a feature is indexed by \(s \in (t-\Delta_l,t]\), then by definition, the label covers the period \([s,s+\Delta_l]\) with \(s+\Delta_l>t\). At time \(t\), this requires knowledge of the future and is naturally not realistic.

FIGURE 12.2: The subtleties in rolling training samples.
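The indexing logic behind the red zone can be sketched as follows. This is a minimal illustration, not the book's implementation: the function name `rolling_schedule`, the use of integer date indices, and the convention that the window contains exactly \(\Delta_s\) feature dates are all assumptions made for the example.

```python
def rolling_schedule(T, delta_s, delta_l, delta_h):
    """Yield (t, train_dates) for each rebalancing date t.

    Dates are integer indices 0..T-1 (an assumption of this sketch).
    Every feature date s kept for training satisfies s + delta_l <= t,
    so that its label is fully known at time t.
    """
    first_t = delta_s + delta_l - 1          # end of the initial buffer period
    for t in range(first_t, T, delta_h):
        last_s = t - delta_l                 # last feature date outside the red zone
        first_s = last_s - delta_s + 1       # rolling window of delta_s feature dates
        yield t, range(first_s, last_s + 1)

# Monthly data: 2-year window, 12-month label, yearly rebalancing
for t, train in rolling_schedule(T=60, delta_s=24, delta_l=12, delta_h=12):
    print(t, train.start, train.stop - 1)
```

With these inputs, the data consumed at each rebalancing date spans \(\Delta_s+\Delta_l=36\) months, and no feature date falls inside \((t-\Delta_l,t]\).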

12.2 Turning signals into portfolio weights

The predictive tools outlined in Chapters 5 to 11 are only meant to provide a signal that is expected to give some information on the future profitability of assets. There are many ways that this signal can be integrated in an investment decision (see Snow (2020) for ways to integrate ML tools into this task).

First and foremost, there are at least two steps in the portfolio construction process and the signal can be used at any of these stages. Relying on the signal for both steps puts a lot of emphasis on the predictions and should only be considered when the level of confidence in the forecasts is high.

The first step is selection. While a forecasting exercise can be carried out on a large number of assets, it is not compulsory to invest in all of these assets. In fact, for long-only portfolios, it would make sense to take advantage of the signal to exclude those assets that are presumably likely to underperform in the future. Often, portfolio policies have fixed sizes that impose a constant number of assets. One heuristic way to exploit the signal is to select the assets that have the most favorable predictions and to discard the others. This naive idea is often used in the asset pricing literature: portfolios are formed according to the quantiles of underlying characteristics and some characteristics are deemed interesting if the corresponding sorted portfolios exhibit very different profitabilities (e.g., high average return for high quantiles versus low average return for low quantiles).

This is for instance an efficient way to test the relevance of the signal. If \(Q\) portfolios \(q=1,\dots,Q\) are formed according to the rankings of the assets with respect to the signal, then one would expect the out-of-sample performance of the portfolios to be monotonic in \(q\). While a rigorous test of monotonicity would require accounting for all portfolios (see, e.g., Romano and Wolf (2013)), it is often simply assumed that comparing the extreme portfolios suffices. If the difference between portfolio number 1 and portfolio number \(Q\) is substantial, then the signal is valuable. Whenever the investor is able to short assets, this amounts to a dollar-neutral strategy.
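As an illustration of this sorting procedure, the sketch below forms \(Q\) equally weighted portfolios from a cross-section of signals and compares the extreme ones. The function name `quantile_portfolios` and the numbers are made up for the example; it assumes there are at least \(Q\) assets and ignores ties in the signal.

```python
def quantile_portfolios(signal, realized, Q):
    """Average realized return of Q equally weighted, signal-sorted groups.

    Group 0 holds the assets with the lowest signal, group Q-1 the highest.
    """
    order = sorted(range(len(signal)), key=lambda n: signal[n])  # ascending signal
    N = len(order)
    groups = [order[q * N // Q:(q + 1) * N // Q] for q in range(Q)]
    return [sum(realized[n] for n in g) / len(g) for g in groups]

# Hypothetical cross-section of six assets
signal = [0.1, -0.3, 0.5, 0.0, 0.8, -0.6]
realized = [0.01, -0.02, 0.03, 0.00, 0.04, -0.01]

avg = quantile_portfolios(signal, realized, Q=3)
spread = avg[-1] - avg[0]      # long the top group, short the bottom group
print(avg, spread)
```

In this toy example the group averages increase with the group index, which is the pattern one hopes to see out-of-sample when the signal is informative.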

The second step is weighting. If the selection process relied on the signal, then a simple weighting scheme is often a good idea. Equally weighted portfolios are known to be hard to beat (see DeMiguel, Garlappi, and Uppal (2009)), especially compared to their cap-weighted alternative, as is shown in Plyakha, Uppal, and Vilkov (2016). More advanced schemes include equal risk contributions (Maillard, Roncalli, and Teiletche (2010)) and constrained minimum variance (Coqueret (2015)). Both only rely on the covariance matrix of the assets and not on any proxy for the vector of expected returns.

For the sake of completeness, we spell out a generalization of Coqueret (2015), which is a generic constrained quadratic program: \[\begin{equation} \tag{12.1} \underset{\textbf{w}}{\text{min}} \ \frac{\lambda}{2} \textbf{w}'\boldsymbol{\Sigma}\textbf{w}-\textbf{w}'\boldsymbol{\mu} , \quad \text{s.t.} \quad \begin{array}{ll} \textbf{w}'\textbf{1}=1, \\ (\textbf{w}-\textbf{w}_-)'\boldsymbol{\Lambda}(\textbf{w}-\textbf{w}_-) \le \delta_R,\\ \textbf{w}'\textbf{w} \le \delta_D, \end{array} \end{equation}\]

where it is easy to recognize the usual mean-variance optimization on the left-hand side. We impose three constraints on the right-hand side.23 The first one is the budget constraint (weights sum to one). The second one penalizes variations in weights (compared to the current allocation, \(\textbf{w}_-\)) via a diagonal matrix \(\boldsymbol{\Lambda}\) that accounts for trading costs. This is a crucial point. Portfolios are rarely constructed from scratch and are most of the time adjustments from existing positions. In order to reduce the orders and the corresponding transaction costs, it is possible to penalize large variations from the existing portfolio. In the above program, the current weights are written \(\textbf{w}_-\) and the desired ones \(\textbf{w}\) so that \(\textbf{w}-\textbf{w}_-\) is the vector of deviations from the current positions. The term \((\textbf{w}-\textbf{w}_-)'\boldsymbol{\Lambda}(\textbf{w}-\textbf{w}_-)\) is the sum of squared deviations, weighted by the diagonal coefficients \(\Lambda_{n,n}\). This can be helpful because some assets may be more costly to trade due to liquidity (large cap stocks are more liquid and their trading costs are lower). When \(\delta_R\) decreases, the rotation is reduced because weights are not allowed to deviate too much from \(\textbf{w}_-\). The last constraint enforces diversification via the Herfindahl-Hirschman index of the portfolio: the smaller \(\delta_D\), the more diversified the portfolio.

Recalling that there are \(N\) assets in the universe, the Lagrange form of (12.1) is:

\[\begin{equation} \tag{12.2} L(\textbf{w})= \frac{\lambda}{2} \textbf{w}'\boldsymbol{\Sigma}\textbf{w}-\textbf{w}'\boldsymbol{\mu}-\eta (\textbf{w}'\textbf{1}_N-1)+\kappa_R ( (\textbf{w}-\textbf{w}_-)'\boldsymbol{\Lambda}(\textbf{w}-\textbf{w}_-) - \delta_R)+\kappa_D(\textbf{w}'\textbf{w}-\delta_D), \end{equation}\]

and the first order condition \[\frac{\partial}{\partial \textbf{w}}L(\textbf{w})= \lambda \boldsymbol{\Sigma}\textbf{w}-\boldsymbol{\mu}-\eta\textbf{1}_N+2\kappa_R \boldsymbol{\Lambda}(\textbf{w}-\textbf{w}_-)+2\kappa_D\textbf{w}=0,\] yields \[\begin{equation} \tag{12.3} \textbf{w}^*_\kappa= (\lambda \boldsymbol{\Sigma}+2\kappa_R \boldsymbol{\Lambda} +2\kappa_D\textbf{I}_N)^{-1} \left(\boldsymbol{\mu} + \eta_{\lambda,\kappa_R,\kappa_D} \textbf{1}_N+2\kappa_R \boldsymbol{\Lambda}\textbf{w}_-\right), \end{equation}\] with \[\eta_{\lambda,\kappa_R,\kappa_D}=\frac{1- \textbf{1}_N'(\lambda\boldsymbol{\Sigma}+2\kappa_R \boldsymbol{\Lambda}+2\kappa_D\textbf{I}_N)^{-1}(\boldsymbol{\mu}+2\kappa_R\boldsymbol{\Lambda}\textbf{w}_-)}{\textbf{1}'_N(\lambda \boldsymbol{\Sigma}+2\kappa_R \boldsymbol{\Lambda}+2\kappa_D\textbf{I}_N)^{-1}\textbf{1}_N}.\]

This parameter ensures that the budget constraint is satisfied. The optimal weights in (12.3) depend on three tuning parameters: \(\lambda\), \(\kappa_R\) and \(\kappa_D\).

- When \(\lambda\) is large, the focus is set more on risk reduction than on profit maximization (which is often a good idea given that risk is easier to predict);

- When \(\kappa_R\) is large, the importance of transaction costs in (12.2) is high and thus, in the limit when \(\kappa_R \rightarrow \infty\), the optimal weights are equal to the old ones \(\textbf{w}_-\) (for finite values of the other parameters).

- When \(\kappa_D\) is large, the portfolio is more diversified and (all other things equal) when \(\kappa_D \rightarrow \infty\), the weights are all equal (to \(1/N\)).

- When \(\kappa_R=\kappa_D=0\), we recover the classical mean-variance weights which are a mix between the maximum Sharpe ratio portfolio proportional to \((\boldsymbol{\Sigma})^{-1} \boldsymbol{\mu}\) and the minimum variance portfolio proportional to \((\boldsymbol{\Sigma})^{-1} \textbf{1}_N\).
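The closed form in (12.3) and the limiting cases listed above can be checked numerically. The snippet below is a sketch under stated assumptions: the function name `optimal_weights` and all inputs (three assets, made-up \(\boldsymbol{\mu}\), \(\boldsymbol{\Sigma}\), \(\boldsymbol{\Lambda}\) and \(\textbf{w}_-\)) are hypothetical and only serve to illustrate the formula.

```python
import numpy as np

def optimal_weights(mu, Sigma, Lambda, w_old, lam, kappa_R, kappa_D):
    """Weights from Equation (12.3), with eta set by the budget constraint."""
    N = len(mu)
    ones = np.ones(N)
    M = lam * Sigma + 2 * kappa_R * Lambda + 2 * kappa_D * np.eye(N)
    b = mu + 2 * kappa_R * Lambda @ w_old
    M_inv_b = np.linalg.solve(M, b)          # M^{-1} (mu + 2 kappa_R Lambda w_old)
    M_inv_1 = np.linalg.solve(M, ones)       # M^{-1} 1_N
    eta = (1 - ones @ M_inv_b) / (ones @ M_inv_1)
    return M_inv_b + eta * M_inv_1

# Made-up inputs for three assets (illustrative only)
mu = np.array([0.02, 0.01, 0.015])
Sigma = np.array([[0.04, 0.01, 0.00],
                  [0.01, 0.03, 0.01],
                  [0.00, 0.01, 0.05]])
Lambda = np.diag([1.0, 2.0, 1.5])
w_old = np.array([0.5, 0.3, 0.2])

w = optimal_weights(mu, Sigma, Lambda, w_old, lam=5.0, kappa_R=0.1, kappa_D=0.1)
w_div = optimal_weights(mu, Sigma, Lambda, w_old, lam=5.0, kappa_R=0.0, kappa_D=1e6)
w_sticky = optimal_weights(mu, Sigma, Lambda, w_old, lam=5.0, kappa_R=1e6, kappa_D=0.0)
print(w.sum())      # budget constraint: close to 1
print(w_div)        # close to 1/N for each asset
print(w_sticky)     # close to the old weights
```

Solving the two linear systems with `np.linalg.solve` avoids forming the matrix inverse explicitly, which is usually preferable numerically when the covariance matrix is ill-conditioned.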

This seemingly complex formula is in fact very flexible and tractable. It requires some tests and adjustments before finding realistic values for \(\lambda\), \(\kappa_R\) and \(\kappa_D\) (see exercise at the end of the chapter). In Pedersen, Babu, and Levine (2020), the authors recommend a similar form, except that the covariance matrix is shrunk towards the diagonal matrix of sample variances and the expected returns are a mix between a signal and an anchor portfolio. The authors argue that their general formulation has links with robust optimization (see also W. C. Kim, Kim, and Fabozzi (2014)), Bayesian inference (Lai et al. (2011)), matrix denoising via random matrix theory, and, naturally, shrinkage. In fact, shrunk expected returns have been around for quite some time (Jorion (1985), Kan and Zhou (2007) and Bodnar, Parolya, and Schmid (2013)) and simply seek to diversify and reduce estimation risk.

12.3 Performance metrics

The evaluation of performance is a key stage in a backtest. This section, while not exhaustive, is intended to cover the most important facets of portfolio assessment.

12.3.1 Discussion

While the evaluation of the accuracy of ML tools (see Section 10.1) is of course valuable (and imperative!), the portfolio returns are the ultimate yardstick during a backtest. One essential element in such an exercise is a benchmark because raw and absolute metrics don’t mean much on their own.

This is not only true at the portfolio level, but also at the ML engine level. In most of the trials of the previous chapters, the MSE of the models on the testing set revolves around 0.037. An interesting figure is the variance of one-month returns on this set, which corresponds to the error made by a constant prediction of 0 all the time. This figure is equal to 0.037, which means that the sophisticated algorithms don’t really improve on a naive heuristic. This benchmark is the one used in the out-of-sample \(R^2\) of Gu, Kelly, and Xiu (2020b).

In portfolio choice, the most elementary allocation is the uniform one, whereby each asset receives the same weight. This seemingly simplistic solution is in fact an incredible benchmark, one that is hard to beat consistently (see DeMiguel, Garlappi, and Uppal (2009) and Plyakha, Uppal, and Vilkov (2016)). Theoretically, uniform portfolios are optimal when uncertainty, ambiguity or estimation risk is high (Pflug, Pichler, and Wozabal (2012), Maillet, Tokpavi, and Vaucher (2015), L. Zhao and Gao (2022)) and empirically, they cannot be outperformed even at the factor level (Dichtl, Drobetz, and Wendt (2020)). Below, we will pick an equally weighted (EW) portfolio of all stocks as our benchmark.

12.3.2 Pure performance and risk indicators

We then turn to the definition of the usual metrics used both by practitioners and academics alike. Henceforth, we write \(r^P=(r_t^P)_{1\le t\le T}\) and \(r^B=(r_t^B)_{1\le t\le T}\) for the returns of the portfolio and those of the benchmark, respectively. When referring to some generic returns, we simply write \(r_t\). There are many ways to analyze them and most of them rely on their distribution.

The simplest indicator is the average return: \[\bar{r}_P=\mu_P=\mathbb{E}[r^P]\approx \frac{1}{T}\sum_{t=1}^T r_t^P, \quad \bar{r}_B=\mu_B=\mathbb{E}[r^B]\approx \frac{1}{T}\sum_{t=1}^T r_t^B,\]

where, obviously, the portfolio is noteworthy if \(\mathbb{E}[r^P]>\mathbb{E}[r^B]\). Note that we use the arithmetic average above but the geometric one is also an option, in which case: \[\tilde{\mu}_P\approx \left(\prod_{t=1}^T(1+r^P_t) \right)^{1/T}-1 , \quad \tilde{\mu}_B \approx \left(\prod_{t=1}^T(1+r^B_t) \right)^{1/T}-1.\] The benefit of this second definition is that it takes the compounding of returns into account and hence compensates for volatility pumping. To see this, consider a very simple two-period model with returns \(-r\) and \(+r\). The arithmetic average is zero, but the geometric one \(\sqrt{1-r^2}-1\) is negative.
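The two-period example can be verified in a few lines. This is only an illustrative sketch: the function names are chosen for the example and \(r=10\%\) is an arbitrary value.

```python
import math

def arithmetic_mean(returns):
    return sum(returns) / len(returns)

def geometric_mean(returns):
    growth = math.prod(1 + r for r in returns)   # compounded gross return
    return growth ** (1 / len(returns)) - 1

r = 0.10
two_period = [-r, +r]
print(arithmetic_mean(two_period))   # 0.0
print(geometric_mean(two_period))    # sqrt(1 - r^2) - 1, slightly negative
```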

Akin to accuracy, hit ratios evaluate the proportion of times when the position is in the right direction (long when the realized return is positive and short when it is negative). Hence hit ratios evaluate the propensity to make good guesses. This can be computed at the asset level (the proportion of positions in the correct direction24) or at the portfolio level. In all cases, the computation can be performed on raw returns or on relative returns (e.g., compared to a benchmark). A meaningful hit ratio is the proportion of times that a strategy beats its benchmark. This is of course not sufficient, as many small gains can be offset by a few large losses.

Lastly, one important clarification. In all examples of supervised learning tools in the book, we compared the hit ratios to 0.5. This is in fact wrong because if an investor is bullish, he or she may always bet on upward moves. In this case, the hit ratio is the percentage of time that returns are positive. Over the long run, this probability is above 0.5. In our sample, it is equal to 0.556, which is well above 0.5. This could be viewed as a benchmark to be surpassed.
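The point about the always-long benchmark can be made concrete with a small sketch. The function `hit_ratio` and the five-period data below are hypothetical; positions are encoded as +1 (long) and -1 (short).

```python
def hit_ratio(positions, realized):
    """Share of periods in which the position sign matches the return sign."""
    hits = sum(1 for p, r in zip(positions, realized) if p * r > 0)
    return hits / len(realized)

realized = [0.02, -0.01, 0.03, 0.01, -0.02]
positions = [1, 1, -1, 1, -1]            # hypothetical directional bets
always_long = [1] * len(realized)        # bullish investor: always bets on a rise

print(hit_ratio(positions, realized))    # 0.6
print(hit_ratio(always_long, realized))  # 0.6
```

Here the strategy is right 60% of the time, which looks better than 0.5, yet it does not improve on simply staying long.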

Pure performance measures are almost always accompanied by risk measures. The second moment of returns is usually used to quantify the magnitude of fluctuations of the portfolio. A large variance implies sizable movements in returns, and hence in portfolio values. This is why the standard deviation of returns is called the volatility of the portfolio. \[\sigma^2_P=\mathbb{V}[r^P]\approx \frac{1}{T-1}\sum_{t=1}^T (r_t^P-\mu_P)^2, \quad \sigma^2_B=\mathbb{V}[r^B]\approx \frac{1}{T-1}\sum_{t=1}^T (r_t^B-\mu_B)^2.\]

In this case, the portfolio can be preferred if it is less risky compared to the benchmark, i.e., when \(\sigma_P^2<\sigma_B^2\) and when average returns are equal (or comparable).

Higher order moments of returns are sometimes used (skewness and kurtosis), but they are far less common. We refer for instance to C. R. Harvey et al. (2010) for one method that takes them into account in the portfolio construction process.

For some people, the volatility is an incomplete measure of risk. It can be argued that it should be decomposed into ‘good’ volatility (when prices go up) versus ‘bad’ volatility when they go down. The downward semi-variance is computed as the variance taken over the negative returns: \[\sigma^2_-\approx \frac{1}{\text{card}(r_t<0)}\sum_{t=1}^T (r_t-\mu_P)^21_{\{r_t<0\}}.\]
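A minimal sketch of both estimators follows, assuming plain Python lists of periodic returns. The function names and the sample are illustrative; as in the formula above, the semi-variance is centered on the full-sample mean.

```python
def sample_variance(returns):
    mu = sum(returns) / len(returns)
    return sum((r - mu) ** 2 for r in returns) / (len(returns) - 1)

def downside_semivariance(returns):
    """Squared deviations from the full-sample mean, averaged over negative returns."""
    mu = sum(returns) / len(returns)
    negatives = [r for r in returns if r < 0]
    if not negatives:                      # no negative return: nothing to measure
        return 0.0
    return sum((r - mu) ** 2 for r in negatives) / len(negatives)

returns = [0.02, -0.01, 0.03, 0.01, -0.02]
volatility = sample_variance(returns) ** 0.5
print(volatility, downside_semivariance(returns))
```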

The average return and the volatility are the typical moment-based metrics used by practitioners. Other indicators rely on different aspects of the distribution of returns with a focus on tails and extreme events. The Value-at-Risk (VaR) is one such example. If \(F_r\) is the empirical cdf of returns and \(F_r^{-1}\) the corresponding quantile function, the VaR at a level of confidence \(\alpha\) (often taken to be 95%) is \[\text{VaR}_\alpha(\textbf{r}_t)=F_r^{-1}(1-\alpha).\]

It is the return threshold of a bad scenario: returns are expected to fall below it a proportion \(1-\alpha\) of the time on average. An even more conservative measure is the so-called Conditional Value at Risk (CVaR), also known as expected shortfall, which computes the average loss over the worst scenarios, i.e., those that fall below the VaR. Its empirical evaluation is \[\text{CVaR}_\alpha(\textbf{r}_t)=\frac{1}{\text{Card}(r_t < \text{VaR}_\alpha(\textbf{r}_t))}\sum_{r_t < \text{VaR}_\alpha(\textbf{r}_t)}r_t.\]
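Both tail measures can be sketched on a small sample. The empirical quantile admits several conventions; the one below (an order statistic with no interpolation) is an assumption of this example, as are the function names and the data. The CVaR follows the strict inequality used in the formula above.

```python
def value_at_risk(returns, alpha=0.95):
    """Empirical (1 - alpha)-quantile of returns (order statistic, no interpolation)."""
    ordered = sorted(returns)
    k = round((1 - alpha) * len(ordered))    # number of returns strictly in the tail
    k = min(max(k, 0), len(ordered) - 1)
    return ordered[k]

def conditional_value_at_risk(returns, alpha=0.95):
    """Average of the returns that lie strictly below the VaR."""
    var = value_at_risk(returns, alpha)
    tail = [r for r in returns if r < var]
    return sum(tail) / len(tail) if tail else var

returns = [0.03, -0.05, 0.01, 0.02, -0.02, 0.04, -0.08, 0.00, 0.01, 0.02]
var_80 = value_at_risk(returns, alpha=0.80)
cvar_80 = conditional_value_at_risk(returns, alpha=0.80)
print(var_80, cvar_80)
```

With ten observations and \(\alpha=0.8\), the two worst returns form the tail and the CVaR is their average, which is necessarily lower than the VaR.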

Going crescendo in the severity of risk measures, the ultimate evaluation of loss is the maximum drawdown. It is equal to the maximum loss suffered from the peak value of the strategy. If we write \(P_t\) for the time-\(t\) value of a portfolio, the drawdown is \[D_T^P=\underset{0 \le t \le T}{\text{max}} P_t-P_T ,\] and the maximum drawdown is \[MD_T^P=\underset{0 \le s \le T}{\text{max}} \left(\underset{0 \le t \le s}{\text{max}} P_t-P_s, 0\right) .\]

This quantity evaluates the greatest loss over the time frame \([0,T]\) and is thus the most conservative risk measure of all.
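The maximum drawdown can be computed in a single pass by tracking the running peak. The sketch below follows the definition above in absolute terms (same units as the portfolio value); the function name and the value path are made up for illustration.

```python
def max_drawdown(values):
    """Largest loss from a running peak, in the units of the portfolio value."""
    peak = values[0]
    worst = 0.0
    for p in values:
        peak = max(peak, p)              # highest value seen so far
        worst = max(worst, peak - p)     # current drawdown versus that peak
    return worst

values = [100, 110, 105, 120, 90, 95, 130]
print(max_drawdown(values))  # 30
```

In this path, the worst loss occurs between the peak at 120 and the subsequent trough at 90, even though the portfolio later reaches a new high.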

12.3.3 Factor-based evaluation

In the spirit of factor models, performance can also be assessed through the lens of exposures. If we recall the original formulation from Equation (3.1): \[r_{t,n}= \alpha_n+\sum_{k=1}^K\beta_{t,k,n}f_{t,k}+\epsilon_{t,n}, \]

then the estimated \(\hat{\alpha}_n\) is the performance that cannot be explained by the other factors. When returns are excess returns (over the risk-free rate) and when there is only one factor, the market factor, then this quantity is called Jensen’s alpha (Jensen (1968)). Often, it is simply referred to as alpha. The other estimate, \(\hat{\beta}_{t,M,n}\) (\(M\) for market), is the market beta.

Because of the rise of factor investing, it has become customary to also report the alpha of more exhaustive regressions. Adding the size and value premium (as in Fama and French (1993)) and even momentum (Carhart (1997)) helps understand if a strategy generates value beyond that which can be obtained through the usual factors.
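For the single-factor case, alpha and beta are the intercept and slope of an ordinary least squares regression. The following sketch assumes a constant beta over the sample (a simplification of the time-varying loadings in the equation above) and uses synthetic data built with a known alpha and beta; the function name `alpha_beta` is hypothetical.

```python
def alpha_beta(portfolio_excess, market_excess):
    """OLS intercept (alpha) and slope (beta) of portfolio on market excess returns."""
    T = len(portfolio_excess)
    mean_p = sum(portfolio_excess) / T
    mean_m = sum(market_excess) / T
    cov = sum((p - mean_p) * (m - mean_m)
              for p, m in zip(portfolio_excess, market_excess))
    var = sum((m - mean_m) ** 2 for m in market_excess)
    beta = cov / var
    alpha = mean_p - beta * mean_m
    return alpha, beta

market = [0.01, -0.02, 0.03, 0.00, 0.02]
portfolio = [0.002 + 1.2 * m for m in market]   # synthetic: alpha = 0.002, beta = 1.2
alpha, beta = alpha_beta(portfolio, market)
print(alpha, beta)
```

Because the synthetic portfolio has no noise, the regression recovers the parameters used to build it (up to floating-point error); with real data, the estimates come with standard errors that should be reported alongside them.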

12.3.4 Risk-adjusted measures

Now, the tradeoff between the average return and the volatility is a cornerstone in modern finance, since Markowitz (1952). The simplest way to synthesize both metrics is via the information ratio: \[IR(P,B)=\frac{\mu_{P-B}}{\sigma_{P-B}},\] where the index \(P-B\) implies that the mean and standard deviations are computed on the long-short portfolio with returns \(r_t^P-r_t^B\). The denominator \(\sigma_{P-B}\) is sometimes called the tracking error.

The most widespread information ratio is the Sharpe ratio (Sharpe (1966)) for which the benchmark is some riskless asset. Instead of directly computing the information ratio between two portfolios or strategies, it is often customary to compare their Sharpe ratios. Simple comparisons can benefit from statistical tests (see, e.g., Olivier Ledoit and Wolf (2008)).

More extreme risk measures can serve as denominator in risk-adjusted indicators. The Managed Account Report (MAR) ratio is, for example, computed as \[MAR^P = \frac{\tilde{\mu}_P}{MD^P},\] while the Treynor ratio is equal to \[\text{Treynor}=\frac{\mu_P}{\hat{\beta}_M},\] i.e., the (excess) return divided by the market beta (see Treynor (1965)). This definition was generalized to multifactor exposures by Hübner (2005) into the generalized Treynor ratio: \[\text{GT}=\mu_P\frac{\sum_{k=1}^K\bar{f}_k}{\sum_{k=1}^K\hat{\beta}_k\bar{f}_k},\] where the \(\bar{f}_k\) are the sample averages of the factors \(f_{t,k}\). We refer to the original article for a detailed account of the analytical properties of this ratio.
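The information ratio and its special case, the Sharpe ratio, can be sketched with the standard library. The function names and return series are illustrative, the riskless rate is assumed constant, and no annualization is applied.

```python
import statistics

def information_ratio(r_p, r_b):
    """Mean over standard deviation (tracking error) of the active returns r_p - r_b."""
    active = [p - b for p, b in zip(r_p, r_b)]
    return statistics.mean(active) / statistics.stdev(active)

def sharpe_ratio(r_p, risk_free=0.0):
    """Information ratio against a constant riskless return (an assumption here)."""
    excess = [p - risk_free for p in r_p]
    return statistics.mean(excess) / statistics.stdev(excess)

r_p = [0.02, -0.01, 0.03, 0.01, -0.02]   # hypothetical portfolio returns
r_b = [0.01, -0.02, 0.01, 0.02, -0.01]   # hypothetical benchmark returns
print(information_ratio(r_p, r_b), sharpe_ratio(r_p))
```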

12.3.5 Transaction costs and turnover

Updating portfolio composition is not free. In all generality, the total cost of one rebalancing at time \(t\) is proportional to \(C_t=\sum_{n=1}^N | \Delta w_{t,n}|c_{t,n}\), where \(\Delta w_{t,n}\) is the change in position for asset \(n\) and \(c_{t,n}\) the corresponding fee. This last quantity is often hard to predict, thus it is customary to use a proxy that depends for instance on market capitalization (large stocks have more liquid shares and thus require smaller fees) or bid-ask spreads (smaller spreads mean smaller fees).

As a first order approximation, it is often useful to compute the average turnover: \[\text{Turnover}=\frac{1}{T-1}\sum_{t=2}^T\sum_{n=1}^N|w_{t,n}-w_{t-,n}|,\] where \(w_{t,n}\) are the desired \(t\)-time weights in the portfolio and \(w_{t-,n}\) are the weights just before the rebalancing. The positions of the first period (launching weights) are excluded from the computation by convention. Transaction costs can then be proxied as a multiple of turnover (times some average or median cost in the cross-section of firms). This is a first order estimate of realized costs that does not take into consideration the evolution of the scale of the portfolio. Nonetheless, a rough figure is much better than none at all.

Once transaction costs (TCs) have been annualized, they can be deducted from average returns to yield a more realistic picture of profitability. In the same vein, the transaction cost-adjusted Sharpe ratio of a portfolio \(P\) is given by \[\begin{equation} \tag{12.4} SR_{TC}=\frac{\mu_P-TC}{\sigma_P}. \end{equation}\]
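The turnover and the cost-adjusted Sharpe ratio can be sketched as follows. Several choices here are assumptions of the example rather than prescriptions: the weights just before rebalancing are obtained by letting the previous target weights drift with asset returns, costs are proxied by a flat `cost_per_unit` of turnover, and annualization simply multiplies by `periods_per_year`. All names and numbers are hypothetical.

```python
def drifted_weights(prev_weights, asset_returns):
    """Weights just before rebalancing, after prices have moved."""
    grown = [w * (1 + r) for w, r in zip(prev_weights, asset_returns)]
    total = sum(grown)
    return [g / total for g in grown]

def average_turnover(target_weights, asset_returns):
    """target_weights[t] are set at date t; asset_returns[t] are earned between t-1 and t."""
    total = 0.0
    for t in range(1, len(target_weights)):
        before = drifted_weights(target_weights[t - 1], asset_returns[t])
        total += sum(abs(w - b) for w, b in zip(target_weights[t], before))
    return total / (len(target_weights) - 1)   # launching weights excluded

def tc_adjusted_sharpe(mu, sigma, turnover, cost_per_unit, periods_per_year):
    """Equation (12.4) with TC proxied as annualized turnover times a flat cost."""
    tc = turnover * cost_per_unit * periods_per_year
    return (mu - tc) / sigma

# Two assets, rebalanced to equal weights at each of three dates
targets = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]
returns = [[0.0, 0.0], [0.10, -0.10], [0.0, 0.0]]
turnover = average_turnover(targets, returns)
print(turnover)
print(tc_adjusted_sharpe(mu=0.08, sigma=0.16, turnover=turnover,
                         cost_per_unit=0.002, periods_per_year=12))
```

Even an equally weighted portfolio generates turnover, because price moves push the weights away from their targets between rebalancing dates.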

Transaction costs are often overlooked in academic articles but can have a sizable impact in real life trading (see, e.g., Novy-Marx and Velikov (2015)). DeMiguel et al. (2020) show how to use factor investing (and exposures) to combine and offset positions and reduce overall fees.

12.4 Common errors and issues

12.4.1 Forward looking data

One of the most common mistakes in portfolio backtesting is the use of forward looking data. It is for instance easy to fall in the trap of the danger zone depicted in Figure 12.2. In this case, the labels used at time \(t\) are computed with knowledge of what happens at times \(t+1\), \(t+2\), etc. It is worth triple checking every step in the code to make sure that strategies are not built on prescient data.

12.4.2 Backtest overfitting

The second major problem is backtest overfitting. The analogy with training set overfitting is easy to grasp. It is a well-known issue and was formalized for instance in White (2000) and Romano and Wolf (2005). In portfolio choice, we refer to Bajgrowicz and Scaillet (2012), D. H. Bailey and Prado (2014) and Lopez de Prado and Bailey (2020), and the references therein.

At any given moment, a backtest depends on only one particular dataset. Often, the result of the first backtest will not be satisfactory - for many possible reasons. Hence, it is tempting to have another try, while altering some parameters that were probably not optimal. This second test may be better, but not quite good enough - yet. Thus, in a third trial, a new weighting scheme can be tested, along with a new (more sophisticated) forecasting engine. Iteratively, the backtester can only end up with a strategy that performs well enough; it is just a matter of time and trials.

One consequence of backtest overfitting is that it is illusory to hope for the same Sharpe ratios in live trading as those obtained in the backtest. Reasonable professionals divide the Sharpe ratio by two at least (C. R. Harvey and Liu (2015), Suhonen, Lennkh, and Perez (2017)). In D. H. Bailey and Prado (2014), the authors even propose a statistical test for Sharpe ratios, provided that some metrics of all tested strategies are stored in memory. The formula for deflated Sharpe ratios is: \[\begin{equation} \tag{12.5} t = \phi\left((SR-SR^*)\sqrt{\frac{T-1}{1-\gamma_3SR+\frac{\gamma_4-1}{4}SR^2}} \right), \end{equation}\] where \(SR\) is the Sharpe Ratio obtained by the best strategy among all that were tested, and \[SR^*=\mathbb{E}[SR]+\sqrt{\mathbb{V}[SR]}\left((1-\gamma)\phi^{-1}\left(1-\frac{1}{N}\right)+\gamma \phi^{-1}\left(1-\frac{1}{Ne}\right) \right),\] is the theoretical average maximum SR. Moreover,

- \(T\) is the number of trading dates;

- \(\gamma_3\) and \(\gamma_4\) are the skewness and kurtosis of the returns of the chosen (best) strategy;

- \(\phi\) is the cdf of the standard Gaussian law and \(\gamma\approx 0.577\) is the Euler-Mascheroni constant;

- \(N\) refers to the number of strategy trials.

If \(t\) defined above is below a certain threshold (e.g., 0.95), then the \(SR\) cannot be deemed significant compared to all of those that were tested. Most of the time, sadly, that is the case. In Equation (12.5), the realized SR must be above the theoretical maximum \(SR^*\) and the scaling factor must be sufficiently large to push the argument inside \(\phi\) above roughly 1.645, so that \(t\) surpasses 0.95.
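Equation (12.5) is straightforward to evaluate with the standard library. The sketch below uses made-up inputs: a per-period Sharpe ratio of 0.30 for the best of 100 trials, a cross-trial mean of 0 and variance of 0.01 for the tested Sharpe ratios, and 240 dates. It assumes that the Sharpe ratio is expressed at the same frequency as the returns, that \(\gamma_4\) is the raw (not excess) kurtosis, and that more than one trial was run.

```python
import math
from statistics import NormalDist

EULER_GAMMA = 0.5772156649   # Euler-Mascheroni constant

def expected_max_sharpe(mean_sr, var_sr, n_trials):
    """Theoretical average maximum Sharpe ratio SR* over n_trials (> 1) strategies."""
    z = NormalDist()
    return mean_sr + math.sqrt(var_sr) * (
        (1 - EULER_GAMMA) * z.inv_cdf(1 - 1 / n_trials)
        + EULER_GAMMA * z.inv_cdf(1 - 1 / (n_trials * math.e))
    )

def deflated_sharpe(sr, sr_star, T, skew, kurt):
    """Statistic t of Equation (12.5)."""
    denom = 1 - skew * sr + (kurt - 1) / 4 * sr ** 2
    return NormalDist().cdf((sr - sr_star) * math.sqrt((T - 1) / denom))

sr_star = expected_max_sharpe(mean_sr=0.0, var_sr=0.01, n_trials=100)
t_stat = deflated_sharpe(sr=0.30, sr_star=sr_star, T=240, skew=-0.5, kurt=4.0)
print(sr_star, t_stat)
```

In this hypothetical setting, the best Sharpe ratio exceeds \(SR^*\) but the resulting statistic stays below 0.95, so the strategy would not be deemed significant once the 100 trials are accounted for.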

In the scientific community, test overfitting is also known as p-hacking. It is rather common in financial economics and the reading of C. R. Harvey (2017) is strongly advised to grasp the magnitude of the phenomenon. p-hacking is also present in most fields that use statistical tests (see, e.g., Head et al. (2015) to cite but one reference). There are several ways to cope with p-hacking:

- don’t rely on p-values (Amrhein, Greenland, and McShane (2019));

- use detection tools (Elliott, Kudrin, and Wuthrich (2019));

- or, finally, use advanced methods that process arrays of statistics (e.g., the Bayesianized versions of p-values to include some prior assessment from C. R. Harvey (2017), or other tests such as those proposed in Romano and Wolf (2005) and Simonsohn, Nelson, and Simmons (2014)).

The first option is wise, but the drawback is that the decision process is then left to another arbitrary yardstick.

12.4.3 Simple safeguards

As is mentioned at the beginning of the chapter, two common sense references for backtesting are Fabozzi and Prado (2018) and R. Arnott, Harvey, and Markowitz (2019). The pieces of advice provided in these two articles are often judicious and thoughtful.

One additional comment pertains to the output of the backtest. One simple, intuitive and widespread metric is the transaction cost-adjusted Sharpe ratio defined in Equation (12.4). In the backtest, let us call \(SR_{TC}^B\) the corresponding value for the benchmark, which we like to define as the equally-weighted portfolio of all assets in the trading universe (in our dataset, roughly one thousand US equities). If the \(SR_{TC}^P\) of the best strategy is above \(2\times SR_{TC}^B\), then there is probably a glitch somewhere in the backtest.

This criterion holds under two assumptions:

- a sufficiently long out-of-sample period and

- long-only portfolios.

It is unlikely that any realistic strategy can outperform a solid benchmark by a very wide margin over the long term. Being able to improve the benchmark’s annualized return by 150 basis points (with comparable volatility) is already a great achievement. Backtests that deliver returns more than 5% above those of the benchmark are dubious.

12.5 Implication of non-stationarity: forecasting is hard

This section is split into two parts: in the first, we discuss the reason that makes forecasting such a difficult task and in the second we present an important theoretical result originally developed for machine learning but that sheds light on any discipline confronted with out-of-sample tests. An interesting contribution related to this topic is the study from Farmer, Schmidt, and Timmermann (2019). The authors assess the predictive fit of linear models through time and show that it varies strongly: sometimes the model performs very well, sometimes not so much. There is no reason why this should not be the case for ML algorithms as well.

12.5.1 General comments

The careful reader must have noticed that throughout Chapters 5 to 11, the performance of ML engines is underwhelming. These disappointing results are there on purpose and highlight the crucial truth that machine learning is no panacea, no magic wand, no philosopher’s stone that can transform data into golden predictions. Most ML-based forecasts fail. This is in fact not only true for very enhanced and sophisticated techniques, but also for simpler econometric approaches (Dichtl et al. (2020)), which again underlines the need to replicate results to challenge their validity.

One reason for that is that datasets are full of noise and extracting the slightest amount of signal is a tough challenge (we recommend a careful reading of the introduction of Timmermann (2018) for more details on this topic). One rationale for that is the ever time-varying nature of factor analysis in the equity space. Some factors can perform very well during one year and then poorly the next year and these reversals can be costly in the context of fully automated data-based allocation processes.

In fact, this is one major difference with many fields for which ML has made huge advances. In image recognition, numbers will always have the same shape, and so will cats, buses, etc. Likewise, a verb will always be a verb and syntaxes in languages do not change. This invariance, though sometimes hard to grasp,25 is nonetheless key to the great improvement both in computer vision and natural language processing.

In factor investing, there does not seem to be such invariance (see Cornell (2020)). There is no factor and no (possibly nonlinear) combination of factors that can explain and accurately forecast returns over long periods of several decades.26 The academic literature has yet to find such a model; but even if it did, a simple arbitrage reasoning would logically invalidate its conclusions in future datasets.

12.5.2 The no free lunch theorem

We start by underlining that the no free lunch theorem in machine learning has nothing to do with the asset pricing condition with the same name (see, e.g., Delbaen and Schachermayer (1994), or, more recently, Cuchiero, Klein, and Teichmann (2016)). The original formulation was given by Wolpert (1992a) but we also recommend a look at the more recent reference Y.-C. Ho and Pepyne (2002). There are in fact several theorems and two of them can be found in Wolpert and Macready (1997).

The statement of the theorem is very abstract and requires some notational conventions. We assume that any training sample \(S=(\{\textbf{x}_1,y_1\}, \dots, \{\textbf{x}_I,y_I\})\) is such that there exists an oracle function \(f\) that perfectly maps the features to the labels: \(y_i=f(\textbf{x}_i)\). The oracle function \(f\) belongs to a very large set of functions \(\mathcal{F}\). In addition, we write \(\mathcal{H}\) for the set of functions to which the forecaster will resort to approximate \(f\). For instance, \(\mathcal{H}\) can be the space of feed-forward neural networks, or the space of decision trees, or the union of both. Elements of \(\mathcal{H}\) are written \(h\) and \(\mathbb{P}[h|S]\) stands for the (largely unknown) distribution of \(h\) knowing the sample \(S\). Similarly, \(\mathbb{P}[f|S]\) is the distribution of oracle functions knowing \(S\). Finally, the features have a given law, \(\mathbb{P}[\textbf{x}]\).

Let us now consider two models, say \(h_1\) and \(h_2\). The statement of the theorem is usually formulated with respect to a classification task. Knowing \(S\), the error when choosing \(h_k\) induced by samples outside of the training sample \(S\) can be quantified as: \[\begin{equation} \tag{12.6} E_k(S)= \int_{f,h}\int_{\textbf{x}\notin S} \underbrace{ (1-\delta(f(\textbf{x}),h_k(\textbf{x})))}_{\text{error term}} \underbrace{\mathbb{P}[f|S]\mathbb{P}[h|S]\mathbb{P}[\textbf{x}]}_{\text{distributional terms}}, \end{equation}\] where \(\delta(\cdot,\cdot)\) is the Kronecker delta function: \[\begin{equation} \tag{12.7} \delta(x,y)=\left\{\begin{array}{ll} 0 & \text{if } x\neq y \\ 1 & \text{if } x = y \end{array} .\right. \end{equation}\] One of the no free lunch theorems states that \(E_1(S)=E_2(S)\), that is, that with the sole knowledge of \(S\), there can be no superior algorithm, on average. In order to build a well-performing algorithm, the analyst or econometrician must have prior views on the structure of the relationship between \(y\) and \(\textbf{x}\) and integrate these views in the construction of the model. Unfortunately, this can also yield underperforming models if the views are incorrect.
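A toy enumeration can make the statement less abstract. The sketch below is only an illustration under strong assumptions that are not in the text: binary labels, a domain of eight points, a uniform distribution over all oracle functions compatible with \(S\), uniform weights on the points outside \(S\), and two deterministic models (`h1` and `h2` are hypothetical). Under these assumptions, both models have the same average out-of-sample error.

```r
# Toy check of a no free lunch statement (assumption: uniform prior over oracles f)
X <- as.matrix(expand.grid(x1 = 0:1, x2 = 0:1, x3 = 0:1))  # The 8 possible feature vectors
out_S <- 6:8                                     # Points outside the training sample S
# Oracles compatible with S only differ through their labels outside S:
f_out <- as.matrix(expand.grid(rep(list(0:1), length(out_S))))  # 2^3 = 8 labelings
h1 <- function(x) rep(0, nrow(x))                # Model 1: always predicts 0
h2 <- function(x) as.numeric(rowSums(x) >= 2)    # Model 2: majority vote of the bits
oos_error <- function(h) {                       # Average of 1 - delta(f(x), h(x))
  pred <- h(X[out_S, , drop = FALSE])            # Predictions outside S
  mean(apply(f_out, 1, function(f) mean(f != pred)))  # Average over oracles and points
}
c(E1 = oos_error(h1), E2 = oos_error(h2))        # Both errors are equal to 0.5
```

Whatever the model predicts at a point outside \(S\), exactly half of the compatible oracles disagree with it, which is why no model can dominate without additional views on \(f\).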

12.6 First example: a complete backtest

We finally propose a fully detailed example of one implementation of an ML-based strategy run on a careful backtest. What follows is a generalization of the content of Section 5.2.2. In the same spirit, we split the backtest into four parts:

- the creation/initialization of variables;

- the definition of the strategies in one main function;

- the backtesting loop itself;

- the performance indicators.

Accordingly, we start with initializations.

sep_oos <- as.Date("2007-01-01") # Starting point for backtest

ticks <- data_ml$stock_id %>% # List of all asset ids

as.factor() %>%

levels()

N <- length(ticks) # Max number of assets

t_oos <- returns$date[returns$date > sep_oos] %>% # Out-of-sample dates

unique() %>% # Remove duplicates

as.Date(origin = "1970-01-01") # Transform in date format

Tt <- length(t_oos) # Nb of dates, avoid T = TRUE

nb_port <- 2 # Nb of portfolios/strategies

portf_weights <- array(0, dim = c(Tt, nb_port, N)) # Initialize portfolio weights

portf_returns <- matrix(0, nrow = Tt, ncol = nb_port) # Initialize portfolio returns

This first step is crucial: it lays the groundwork for the core of the backtest. We consider only two strategies: one ML-based and the EW (1/N) benchmark. The main (weighting) function will consist of these two components, but we define the sophisticated one in a dedicated wrapper. The ML-based weights are derived from XGBoost predictions with 80 trees, a learning rate of 0.3 and a maximum tree depth of 4. This makes the model complex but not exceedingly so. Once the predictions are obtained, the weighting scheme is simple: it is an EW portfolio over the best half of the stocks (those with above median prediction).

In the function below, all parameters (e.g., the learning rate eta or the number of trees nrounds) are hard-coded. They can easily be passed as arguments next to the data inputs. One very important detail is that, in contrast to the rest of the book, the label is the 12-month future return. The main reason for this is rooted in the discussion from Section 4.6. Also, to speed up the computations, we remove the bulk of the distribution of the labels and keep only the top 20% and bottom 20%, as is advised in Coqueret and Guida (2020). The filtering levels could also be passed as arguments.
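Before turning to the function, the two mechanical steps described above (filtering the label and equally weighting the above-median predictions) can be sketched in isolation. The data below are simulated and the names `label`, `keep` and `pred` are hypothetical stand-ins for the training label and the model output.

```r
# Simulated illustration of the label filter and of the weighting rule
set.seed(1)
n <- 1000
label <- rnorm(n)                                # Hypothetical training labels
keep <- which(label < quantile(label, 0.2) |     # Keep the bottom 20%...
              label > quantile(label, 0.8))      # ...and the top 20%
share_kept <- length(keep) / n                   # Share of the sample that is retained
pred <- rnorm(50)                                # Hypothetical predictions for 50 stocks
w <- as.numeric(pred > median(pred))             # 1 if above-median prediction, else 0
w <- w / sum(w)                                  # Equal weights over the selected stocks
c(share_kept = share_kept, nb_assets = sum(w > 0), total_weight = sum(w))
```

The filter discards the middle 60% of the labels, so the model is trained on the most contrasted observations only, and the portfolio holds half of the test universe with identical weights.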

weights_xgb <- function(train_data, test_data, features){

train_features <- train_data %>% dplyr::select(dplyr::all_of(features)) %>% as.matrix() # Indep. variable

train_label <- train_data$R12M_Usd / exp(train_data$Vol1Y_Usd) # Dep. variable

ind <- which(train_label < quantile(train_label,0.2)| # Filter

train_label > quantile(train_label, 0.8))

train_features <- train_features[ind, ] # Filt'd features

train_label <- train_label[ind] # Filtered label

train_matrix <- xgb.DMatrix(data = train_features, label = train_label) # XGB format

fit <- train_matrix %>%

xgb.train(data = ., # Data source (pipe input)

eta = 0.3, # Learning rate

objective = "reg:squarederror", # Objective function: squared error (regression)

max_depth = 4, # Maximum depth of trees

nrounds = 80, # Number of trees used

verbose = 0 # No comments

)

xgb_test <- test_data %>% # Test sample => XGB format

dplyr::select(dplyr::all_of(features)) %>%

as.matrix() %>%

xgb.DMatrix()

pred <- predict(fit, xgb_test) # Single prediction

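w <- pred > median(pred) # Keep only the 50% best predictions

w <- list(weights = w / sum(w), # Equal weights over the selected stocks

names = unique(test_data$stock_id)) # Asset names (list avoids coercing a vector with $)

return(w) # Best predictions, equally-weighted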

}

Compared to the structure proposed in Section 6.4.6, the difference is that the label is not only based on long-term returns, but also relies on a volatility component. Even though the denominator in the label is the exponential of the quantile of the volatility, it seems fair to say that it is inspired by the Sharpe ratio and that the model seeks to explain and forecast a risk-adjusted return instead of a raw return. A stock with very low volatility will have its return unchanged in the label, while a stock with very high volatility will see its return divided by a factor close to three (exp(1)=2.718).
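The scaling can be checked on a small worked example. The three stocks below are hypothetical, and the sketch assumes, as the text suggests, that the volatility feature is a quantile lying between 0 and 1.

```r
# Worked example of the label used in weights_xgb() (hypothetical inputs)
ret_12m <- c(0.10, 0.10, 0.10)                   # Same raw 12-month return for 3 stocks
vol_q   <- c(0, 0.5, 1)                          # Lowest, median, highest volatility quantile
scaling <- exp(vol_q)                            # Denominator of the label
label   <- ret_12m / scaling                     # Risk-adjusted label
data.frame(vol_q, scaling = round(scaling, 3), label = round(label, 4))
```

With identical raw returns, the label decreases monotonically with the volatility quantile, so the model is pushed towards stocks that earned their return with less risk.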

This function is then embedded in the global weighting function which only wraps two schemes: the EW benchmark and the ML-based policy.

portf_compo <- function(train_data, test_data, features, j){

if(j == 1){ # This is the benchmark

N <- test_data$stock_id %>% # Test data dictates allocation

factor() %>% nlevels()

w <- list(weights = rep(1/N, N), # EW portfolio

names = unique(test_data$stock_id)) # Asset names

return(w)

}

if(j == 2){ # This is the ML strategy.

return(weights_xgb(train_data, test_data, features))

}

}

Equipped with this function, we can turn to the main backtesting loop. Because we use a large-scale model, the computation time for the loop is long (possibly a few hours on a slow machine with CPU). Resorting to functional programming can speed up the loop (see exercise at the end of the chapter). Also, a simple equally weighted benchmark portfolio can be coded with tidyverse functions only.

m_offset <- 12 # Offset in months for buffer period

train_size <- 5 # Size of training set in years

for(t in 1:(length(t_oos)-1)){ # Stop before last date: no fwd ret.!

if(t%%12==0){print(t_oos[t])} # Just checking the date status

train_data <- data_ml %>% filter(date < t_oos[t] - m_offset * 30, # Roll window w. buffer

date > t_oos[t] - m_offset * 30 - 365 * train_size)

test_data <- data_ml %>% filter(date == t_oos[t]) # Test sample

realized_returns <- test_data %>% # Computing returns via:

dplyr::select(R1M_Usd) # 1M holding period!

for(j in 1:nb_port){

temp_weights <- portf_compo(train_data, test_data, features, j) # Weights

ind <- match(temp_weights$names, ticks) %>% na.omit() # Index: test vs all

portf_weights[t,j,ind] <- temp_weights$weights # Allocate weights

portf_returns[t,j] <- sum(temp_weights$weights * realized_returns) # Compute returns

}

}

## [1] "2007-12-31"

## [1] "2008-12-31"

## [1] "2009-12-31"

## [1] "2010-12-31"

## [1] "2011-12-31"

## [1] "2012-12-31"

## [1] "2013-12-31"

## [1] "2014-12-31"

## [1] "2015-12-31"

## [1] "2016-12-31"

## [1] "2017-12-31"

There are two important comments to be made on the above code. The first comment pertains to the two parameters that are defined in the first lines. They refer to the size of the training sample (5 years) and the length of the buffer period shown in Figure 12.2. This buffer period is imperative because the label is based on a long-term (12-month) return. This lag is compulsory to avoid any forward-looking bias in the backtest.
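The bounds of the rolling window can be inspected in isolation. In the sketch below, `t_now` is a hypothetical rebalancing date, and the two bounds reproduce the date arithmetic of the filter used in the loop (both bounds are strict).

```r
# Training window implied by the two parameters, for one hypothetical date
m_offset   <- 12                                 # Buffer in months
train_size <- 5                                  # Training sample in years
t_now <- as.Date("2007-01-31")                   # Hypothetical rebalancing date
train_end   <- t_now - m_offset * 30             # Upper bound: 360 days before t_now
train_start <- train_end - 365 * train_size      # Lower bound: 5 x 365 days earlier
buffer_days <- as.numeric(t_now - train_end)     # Length of the buffer in days
window_days <- as.numeric(train_end - train_start)  # Length of the window in days
c(train_start = format(train_start), train_end = format(train_end),
  buffer_days = buffer_days, window_days = window_days)
```

Only observations dated strictly between the two bounds enter the training sample, which leaves roughly one year between the last training date and the date at which the portfolio is formed.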

Below, we create a function that computes the turnover (variation in weights). It requires both the weight values as well as the returns of all assets because the weights just before a rebalancing depend on the weights assigned in the previous period, as well as on the returns of the assets that have altered these original weights during the holding period.
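The drift of the weights can be illustrated on a toy example with three assets. All figures below are hypothetical; the computation mirrors the one-period term that is summed in the function that follows.

```r
# One-period turnover on a toy three-asset portfolio (hypothetical numbers)
w_prev  <- c(0.5, 0.3, 0.2)                      # Weights set at the previous rebalancing
r       <- c(0.10, 0.00, -0.05)                  # Asset returns over the holding period
w_drift <- w_prev * (1 + r)                      # Positions after the returns are realized
w_drift <- w_drift / sum(w_drift)                # Weights just before rebalancing
w_new   <- rep(1/3, 3)                           # Target weights at the new date
turn    <- sum(abs(w_new - w_drift))             # Sum of absolute weight changes
round(c(w_drift, turnover = turn), 4)
```

Even if the target weights had stayed at `w_prev`, the turnover would not be zero, because the returns alone move the weights away from their targets.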

turnover <- function(weights, asset_returns, t_oos){

turn <- 0

for(t in 2:length(t_oos)){

realised_returns <- asset_returns %>% filter(date == t_oos[t]) %>% dplyr::select(-date) # Use the argument, not a global

prior_weights <- weights[t-1,] * (1 + realised_returns) # Before rebalancing

turn <- turn + apply(abs(weights[t,] - prior_weights/sum(prior_weights)),1,sum)

}

return(turn/(length(t_oos)-1))

}

Once turnover is defined, we embed it into a function that computes several key indicators.

perf_met <- function(portf_returns, weights, asset_returns, t_oos){

avg_ret <- mean(portf_returns, na.rm = TRUE) # Arithmetic mean

vol <- sd(portf_returns, na.rm = TRUE) # Volatility

Sharpe_ratio <- avg_ret / vol # Sharpe ratio

VaR_5 <- quantile(portf_returns, 0.05) # Value-at-risk

turn <- 0 # Initialisation of turnover

for(t in 2:dim(weights)[1]){

realized_returns <- asset_returns %>% filter(date == t_oos[t]) %>% dplyr::select(-date)

prior_weights <- weights[t-1,] * (1 + realized_returns)

turn <- turn + apply(abs(weights[t,] - prior_weights/sum(prior_weights)),1,sum)

}

turn <- turn/(length(t_oos)-1) # Average over time

met <- data.frame(avg_ret, vol, Sharpe_ratio, VaR_5, turn) # Aggregation of all of this

rownames(met) <- "metrics"

return(met)

}

Lastly, we build a function that loops on the various strategies.

perf_met_multi <- function(portf_returns, weights, asset_returns, t_oos, strat_name){

J <- dim(weights)[2] # Number of strategies

met <- c() # Initialization of metrics

for(j in 1:J){ # One very ugly loop

temp_met <- perf_met(portf_returns[, j], weights[, j, ], asset_returns, t_oos)

met <- rbind(met, temp_met)

}

row.names(met) <- strat_name # Stores the name of the strat

return(met)

}

Given the weights and returns of the portfolios, it remains to compute the returns of the assets to plug them in the aggregate metrics function.

asset_returns <- data_ml %>% # Compute return matrix: start from data

dplyr::select(date, stock_id, R1M_Usd) %>% # Keep 3 attributes

spread(key = stock_id, value = R1M_Usd) # Shape in matrix format

asset_returns[is.na(asset_returns)] <- 0 # Zero returns for missing points

met <- perf_met_multi(portf_returns = portf_returns, # Computes performance metrics

weights = portf_weights,

asset_returns = asset_returns,

t_oos = t_oos,

strat_name = c("EW", "XGB_SR"))

met # Displays perf metrics## avg_ret vol Sharpe_ratio VaR_5 turn

## EW 0.009697248 0.05642917 0.1718481 -0.07712509 0.0714512

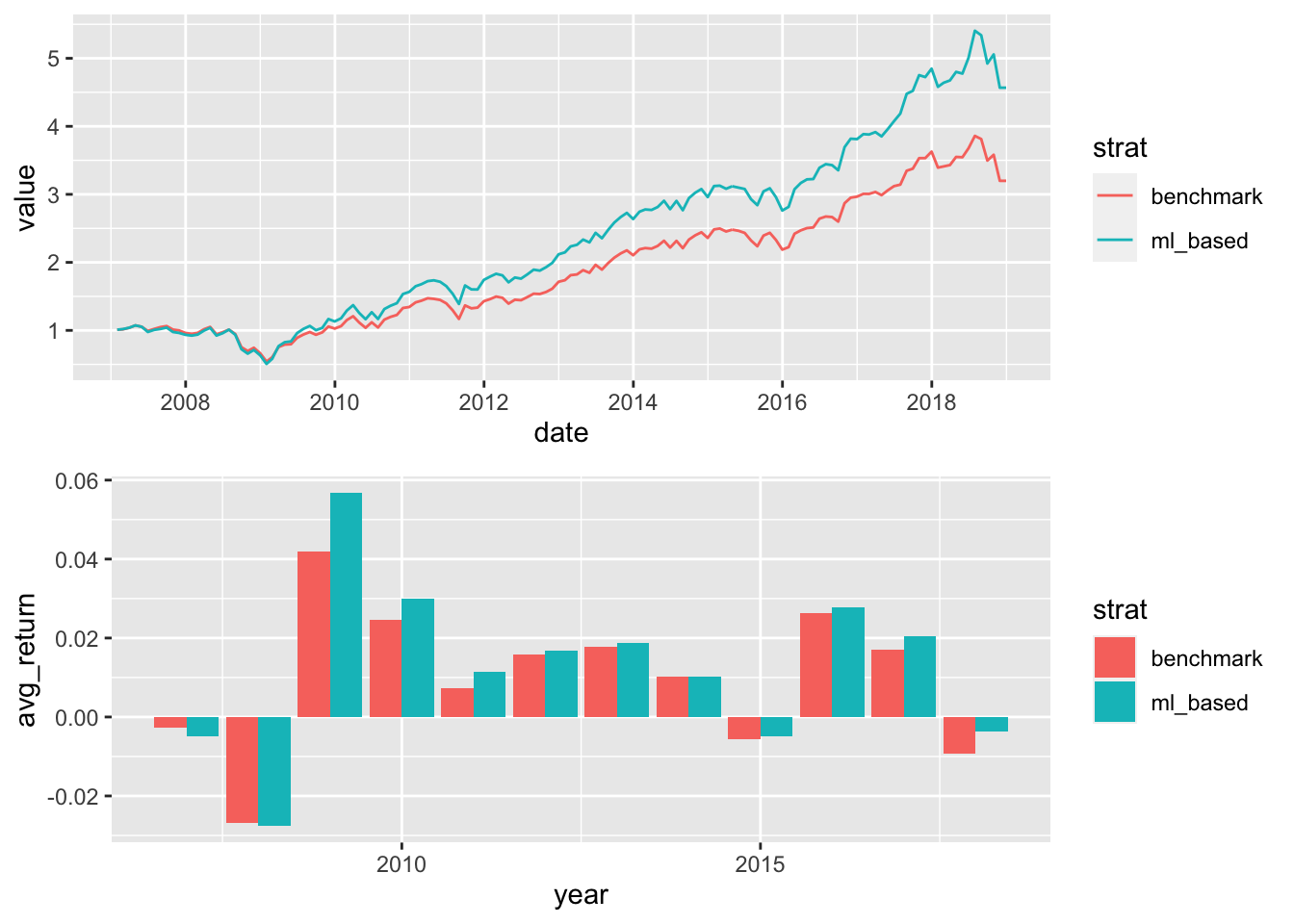

## XGB_SR 0.012602882 0.06376845 0.1976351 -0.08335864 0.5679932The ML-based strategy performs finally well! The gain is mostly obtained by the average return, while the volatility is higher than that of the benchmark. The net effect is that the Sharpe ratio is improved compared to the benchmark. The augmentation is not breathtaking, but (hence?) it seems reasonable. It is noteworthy to underline that turnover is substantially higher for the sophisticated strategy. Removing costs in the numerator (say, 0.005 times the turnover, as in Goto and Xu (2015), which is a conservative figure) only mildly reduces the superiority in Sharpe ratio of the ML-based strategy.

Finally, it is always tempting to plot the corresponding portfolio values and we display two related graphs in Figure 12.3.

library(lubridate) # Date management

library(cowplot) # Plot grid management

g1 <- tibble(date = t_oos,

benchmark = cumprod(1+portf_returns[,1]),

ml_based = cumprod(1+portf_returns[,2])) %>%

gather(key = strat, value = value, -date) %>%

ggplot(aes(x = date, y = value, color = strat)) + geom_line() + theme_grey()

g2 <- tibble(year = lubridate::year(t_oos),

benchmark = portf_returns[,1],

ml_based = portf_returns[,2]) %>%

gather(key = strat, value = value, -year) %>%

group_by(year, strat) %>%

summarise(avg_return = mean(value)) %>%

ggplot(aes(x = year, y = avg_return, fill = strat)) +

geom_col(position = "dodge") + theme_grey()

plot_grid(g1,g2, nrow = 2)

FIGURE 12.3: Graphical representation of the performance of the portfolios.

Out of the 12 years of the backtest, the advanced strategy outperforms the benchmark in 10. It is less hurtful in two of the four years of aggregate losses (2015 and 2018). This is a satisfactory improvement because the EW benchmark is tough to beat!

12.7 Second example: backtest overfitting

To end this chapter, we quantify the concepts of Section 12.4.2. First, we build a function that is able to generate performance metrics for simple strategies that can be evaluated in batches. The strategies are pure factor bets and depend on three inputs: the chosen characteristic (e.g., market capitalization), a threshold level (quantile of the characteristic) and a direction (long position in the top or bottom of the distribution).
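A minimal sketch of one such factor bet at a single date may help fix ideas. The data are simulated, the helper `bet()` is hypothetical, and the characteristic is assumed to be uniformized (mapped to the unit interval), so that the threshold can be read as a quantile.

```r
# One factor bet at a single date, on simulated data
set.seed(7)
n <- 10
feature <- (rank(rnorm(n)) - 0.5) / n            # Hypothetical uniformized characteristic
ret     <- rnorm(n, mean = 0.01, sd = 0.05)      # Hypothetical one-month returns
bet <- function(feature, ret, thresh, direction) {
  decision <- direction * feature > direction * thresh  # Long above (1) or below (-1)
  w <- decision / sum(decision)                  # Equal weights over the selected assets
  sum(w * ret)                                   # Portfolio return
}
long_top    <- bet(feature, ret, 0.3,  1)        # Stocks above the 0.3 quantile
long_bottom <- bet(feature, ret, 0.3, -1)        # Stocks below the 0.3 quantile
c(long_top = long_top, long_bottom = long_bottom, universe = mean(ret))
```

For a given threshold, the two directions split the universe into two complementary portfolios, so their returns, weighted by the share of assets they hold, add up to the equally weighted return of the universe.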

strat <- function(data, feature, thresh, direction){

data_tmp <- dplyr::select(data, dplyr::all_of(feature), date, R1M_Usd) # Data

colnames(data_tmp)[1] <- "feature" # Colname

data_tmp %>%

mutate(decision = direction * feature > direction * thresh) %>% # Investment decision

group_by(date) %>% # Date-by-date analysis

mutate(nb = sum(decision), # Nb assets in portfolio

w = decision / nb, # Weights of assets

return = w * R1M_Usd) %>% # Asset contribution

summarise(p_return = sum(return)) %>% # Portfolio return

summarise(avg = mean(p_return), sd = sd(p_return), SR = avg/sd) %>% # Perf. metrics

return()

}

Then, we test the function on a triplet of arguments. We pick the price-to-book (Pb) ratio. The direction is positive and the threshold is 0.3, which means that the strategy buys the stocks that have a Pb value above the 0.3 quantile of the distribution.

strat(data_ml, "Pb", 0.3, 1) # Long stocks with high price-to-book

## # A tibble: 1 × 3

## avg sd SR

## <dbl> <dbl> <dbl>

## 1 0.0102 0.0496 0.207

The output keeps three quantities that will be useful to compute the statistic (12.5). We must now generate these indicators for many strategies. We start by creating the grid of parameters.

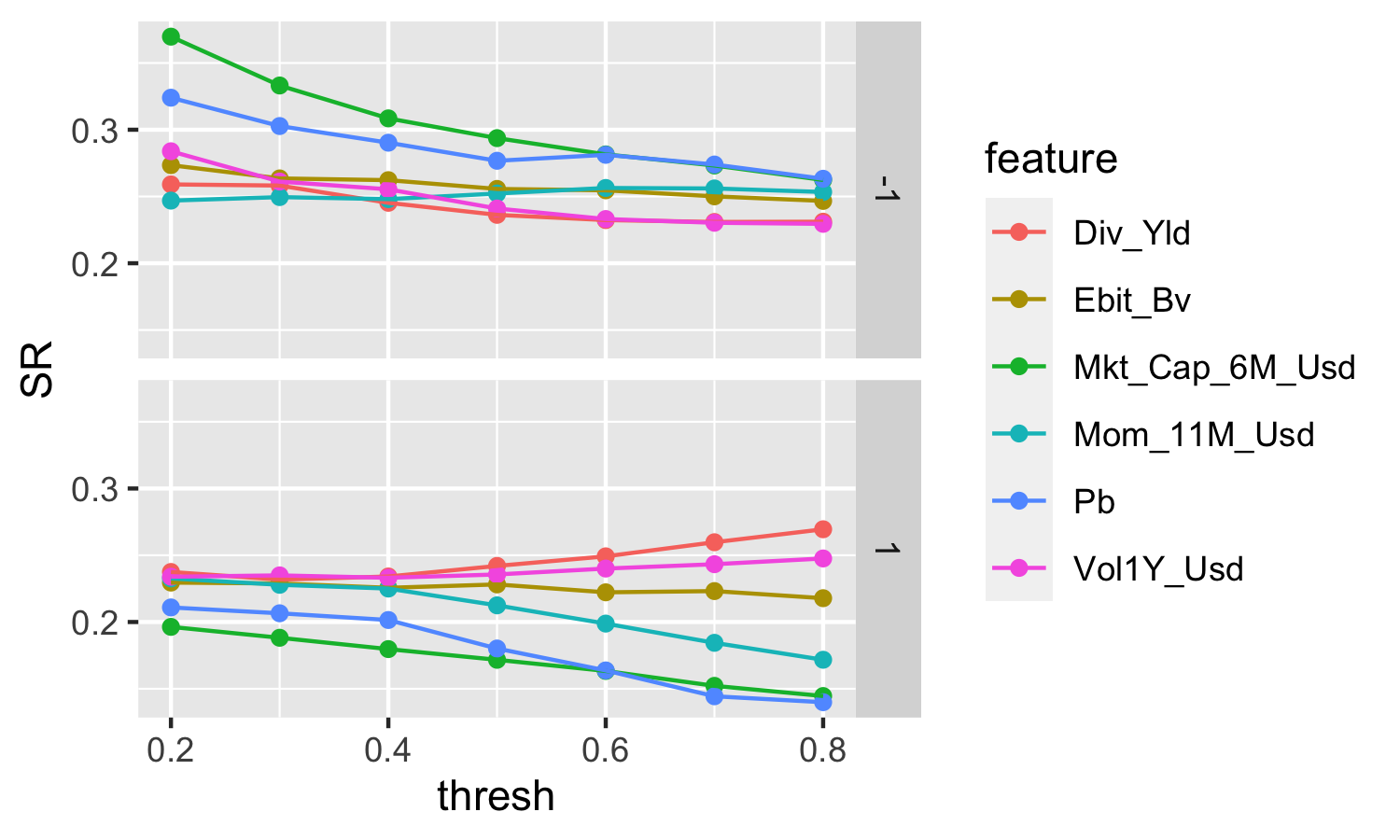

feature <- c("Div_Yld", "Ebit_Bv", "Mkt_Cap_6M_Usd", "Mom_11M_Usd", "Pb", "Vol1Y_Usd")

thresh <- seq(0.2,0.8, by = 0.1) # Threshold values

direction <- c(1,-1) # Decision direction

pars <- expand.grid(feature, thresh, direction) # The grid

feature <- pars[,1] %>% as.character() # re-features

thresh <- pars[,2] # re-thresholds

direction <- pars[,3] # re-directions

This makes 84 strategies in total. We can proceed to see how they fare. We plot the corresponding Sharpe ratios below in Figure 12.4. The top plot (direction = -1) shows the strategies that invest in the bottoms of the distributions of characteristics, while the bottom plot (direction = 1) pertains to the portfolios that are long in the upper parts of these distributions.

grd <- pmap(list(feature, thresh, direction), # Parameters for the grid search

strat, # Function on which to apply the grid search

data = data_ml # Data source/input

) %>%

unlist() %>%

matrix(ncol = 3, byrow = T)

grd <- data.frame(feature, thresh, direction, grd) # Gather & reformat results

colnames(grd)[4:6] <- c("mean", "sd", "SR") # Change colnames

grd <- grd %>% mutate_at(vars(direction), as.factor) # Change type: factor (for plot)

grd %>% ggplot(aes(x = thresh, y = SR, color = feature)) + # Plot!

geom_point() + geom_line() + facet_grid(direction~.)

FIGURE 12.4: Sharpe ratios of all backtested strategies.

The last step is to compute the statistic (12.5). We code it here:

DSR <- function(SR, Tt, M, g3, g4, SR_m, SR_v){ # First, we build the function

gamma <- -digamma(1) # Euler-Mascheroni constant

SR_star <- SR_m + sqrt(SR_v)*((1-gamma)*qnorm(1-1/M) + gamma*qnorm(1-1/M/exp(1))) # SR*

num <- (SR-SR_star) * sqrt(Tt-1) # Numerator

den <- sqrt(1 - g3*SR + (g4-1)/4*SR^2) # Denominator

return(pnorm(num/den))

}

All that remains to do is to evaluate the arguments of the function. The “best” strategy is the one on the top left corner of Figure 12.4 and it is based on market capitalization.

M <- nrow(pars) # Number of strategies we tested

SR <- max(grd$SR) # The SR we want to test

SR_m <- mean(grd$SR) # Average SR across all strategies

SR_v <- var(grd$SR) # Variance of SR across all strategies

# Below, we compute the returns of the strategy by recycling the code of the strat() function

data_tmp <- dplyr::select(data_ml, "Mkt_Cap_6M_Usd", date, R1M_Usd) # feature = Mkt_Cap

colnames(data_tmp)[1] <- "feature"

returns_DSR <- data_tmp %>%

mutate(decision = feature < 0.2) %>% # Investment decision: 0.2 is the best threshold

group_by(date) %>% # Date-by-date computations

mutate(nb = sum(decision), # Nb assets in portfolio

w = decision / nb, # Portfolio weights

return = w * R1M_Usd) %>% # Asset contribution to return

summarise(p_return = sum(return)) # Portfolio return

g3 <- skewness(returns_DSR$p_return) # Function from the e1071 package

g4 <- kurtosis(returns_DSR$p_return) + 3 # Function from the e1071 package

Tt <- nrow(returns_DSR) # Number of dates

DSR(SR, Tt, M, g3, g4, SR_m, SR_v) # The sought value!

## [1] 0.6676416

The value 0.6676416 is not high enough (it does not reach the 90% or 95% threshold) to make the strategy significantly superior to the other ones that were considered in the batch of tests.
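The benchmark `SR_star` inside `DSR()` deserves a sanity check. The term that multiplies `sqrt(SR_v)` approximates the expected maximum of `M` independent standard Gaussian draws. The simulation below is a sketch under that independence and normality assumption (which real strategies only satisfy approximately); the number of replications is arbitrary.

```r
# Expected maximum of M standard Gaussian draws: closed-form approximation vs simulation
set.seed(123)
M     <- 84                                      # Number of strategies, as in the grid above
gamma <- -digamma(1)                             # Euler-Mascheroni constant
approx_max <- (1 - gamma) * qnorm(1 - 1/M) +     # Same expression as in DSR()
  gamma * qnorm(1 - 1/(M * exp(1)))
sim_max <- mean(replicate(20000, max(rnorm(M)))) # Monte Carlo estimate of E[max]
round(c(approximation = approx_max, simulation = sim_max), 3)
```

The more strategies are tested, the larger this expected maximum, and the higher the Sharpe ratio that the best strategy must reach before it can be deemed significant.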

12.8 Coding exercises

- Code the returns of the EW portfolio with tidyverse functions only (no loop).

- Code the advanced weighting function defined in Equation (12.3).

- Test it in a small backtest and check its sensitivity to the parameters.

- Using the functional programming package purrr, avoid the loop in the backtest.